Do we need to worry about the page corruption ?

May be Yes!

As you may know - Data are stored and maintained by the SQL Server as Page(s). Right ?

A page can have up to 8 KB of data (8192 Bytes)

When I try to fetch the data from a table, The SQL Server database engine traverse through the pages what are allocated to the tables data and flush the appropriate records to the client (Management Studio or request end-point). But,

If something goes wrong at internal structure which the system may not be able to traverse through the pages we requested because of the logical inconsistent among the pages - May end with an Error called Logical Inconstant with I/O operations!

But, The page corruption occurs in the various levels as follows

Data Pages

Index Pages

Text/Image

File header page (Page ID 0)

Page 9 (Database Boot Page)

Allocation Pages (GAM, SGAM, PFS, IAM, BCM and DCM)

For Detailed info., on each page types:

https://docs.microsoft.com/en-us/sql/relational-databases/pages-and-extents-architecture-guide?view=sql-server-ver15

The page corruptions can be repaired automatically using Mirroring or Always-On Availability Group

But, Some of the page corruptions can't be repaired automatically by Mirroring or Always-On Availability Group either

Ok. Let's enable Mirroring, try with Failover and corrupt some of the Data Page(s) in Principal database and see whether the automatic page repair works!

let's begin...

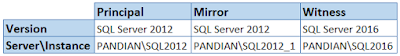

My Test Environments

I am going to use SQL Server 2012 Instance "PANDIAN\SQL2012" as Principal, SQL Server 2012 Instance "PANDIAN\SQL2012_1" as Mirror and SQL Server 2016 Instance "PANDIAN\SQL2016" as Witness.

Why I am using Same Version of SQL Server for Principal and Mirror ? let me tell you later

Let's change the SQL management studio session in "SQLCMD Mode" (Query menu --> SQLCMD Mode)

1. To Create a Sample database in Principal Instance

:CONNECT PANDIAN\SQL2012

CREATE DATABASE PrimaryDB1

GO

Connecting to PANDIAN\SQL2012...

Disconnecting connection from PANDIAN\SQL2012...

2. Creating sample table and records

:CONNECT PANDIAN\SQL2012

USE PrimaryDB1

GO

CREATE TABLE TABLE1(ID INT IDENTITY(1,1), NAMES VARCHAR(10))

GO

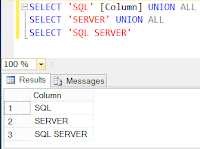

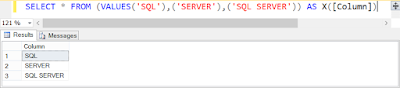

INSERT TABLE1(NAMES) VALUES('SQL'),('Server'),('SQL Server')

INSERT TABLE1(NAMES) VALUES('Mirroring'),('Failover'),('Testing')

INSERT TABLE1(NAMES) VALUES('Automatic'),('Page'),('Corruption'),('Manual')

GO

Connecting to PANDIAN\SQL2012...

(3 row(s) affected)

(3 row(s) affected)

(4 row(s) affected)

Disconnecting connection from PANDIAN\SQL2012...

3. Sample records from the Table

:CONNECT PANDIAN\SQL2012

USE PrimaryDB1

GO

SELECT * FROM TABLE1

4. Taking FULL Backup from Principal Instance

:CONNECT PANDIAN\SQL2012

BACKUP DATABASE [PrimaryDB1] TO DISK = N'\\PANDIAN\Personal\MirroringPath\PrimaryDB1.Bak'

WITH NOFORMAT, NOINIT, SKIP, NOREWIND, NOUNLOAD, STATS = 10

GO

The shared path should be accessed by Mirror Instance

5. Taking LOG Backup from Principal Instance

:CONNECT PANDIAN\SQL2012

BACKUP LOG [PrimaryDB1] TO DISK = N'\\PANDIAN\Personal\MirroringPath\PrimaryDB1.Trn'

WITH NOFORMAT, NOINIT, SKIP, NOREWIND, NOUNLOAD, STATS = 10

GO

6. Restore the FULL Backup in Mirror Instance

:CONNECT PANDIAN\SQL2012_1

USE [Master]

GO

RESTORE DATABASE [PrimaryDB1] FROM DISK = N'C:\Personal\MirroringPath\PrimaryDB1.Bak'

WITH FILE = 1,

MOVE N'PrimaryDB1' TO N'C:\Personal\DATA\PrimaryDB1.mdf',

MOVE N'PrimaryDB1_log' TO N'C:\Personal\LOG\PrimaryDB1_log.ldf',

NORECOVERY, NOUNLOAD, STATS = 5

GO

The restore should be in NORECOVERY state

7. Restore LOG Backup in Mirror Instance

:CONNECT PANDIAN\SQL2012_1

USE [Master]

GO

RESTORE LOG [PrimaryDB1] FROM DISK = N'C:\Personal\MirroringPath\PrimaryDB1.Trn'

WITH FILE = 1, NORECOVERY, NOUNLOAD, STATS = 10

GO

The LOG backup restore also should be in NORECOVERY state

8. Create ENDPOINT for the Principal Instance

:CONNECT PANDIAN\SQL2012

CREATE ENDPOINT Mirroring

AS TCP (LISTENER_PORT=5022)

FOR DATA_MIRRORING (ROLE=PARTNER,ENCRYPTION=REQUIRED ALGORITHM AES);

9. Give CONNECT Permission to the ENDPOINT for the service account in Principal Instance

:CONNECT PANDIAN\SQL2012

GRANT CONNECT ON ENDPOINT::Mirroring TO [PANDIAN\SQLServerBuddy]

Make sure the SQL Server runs under this service account

10. To make sure the ENDPOINT created

:CONNECT PANDIAN\SQL2012

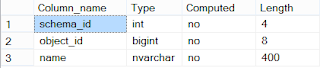

SELECT serverproperty('servername') [Servername], e.name [Endpoint], e.protocol_desc [Protocol], e.type_desc [Type], e.state_desc [State], e.role_desc [Role], e.encryption_algorithm_desc [ENCRYPTION ALGORITHM],ep.Port [Endpoint Port] FROM SYS.DATABASE_MIRRORING_ENDPOINTS e join SYS.TCP_ENDPOINTS ep ON (e.endpoint_id = ep.endpoint_id)

See, The ENDPOINT not started yet

11. Let me START the ENDPOINT

:CONNECT PANDIAN\SQL2012

ALTER ENDPOINT Mirroring STATE=STARTED;

12. To make sure the ENDPOINT started

:CONNECT PANDIAN\SQL2012

SELECT serverproperty('servername') [Servername], e.name [Endpoint], e.protocol_desc [Protocol], e.type_desc [Type], e.state_desc [State], e.role_desc [Role], e.encryption_algorithm_desc [ENCRYPTION ALGORITHM],ep.Port [Endpoint Port] FROM SYS.DATABASE_MIRRORING_ENDPOINTS e join SYS.TCP_ENDPOINTS ep ON (e.endpoint_id = ep.endpoint_id)

See, The ENDPOINT is started!

13. Create ENDPOINT for the Mirror Instance

May be Yes!

As you may know - Data are stored and maintained by the SQL Server as Page(s). Right ?

A page can have up to 8 KB of data (8192 Bytes)

When I try to fetch the data from a table, The SQL Server database engine traverse through the pages what are allocated to the tables data and flush the appropriate records to the client (Management Studio or request end-point). But,

If something goes wrong at internal structure which the system may not be able to traverse through the pages we requested because of the logical inconsistent among the pages - May end with an Error called Logical Inconstant with I/O operations!

But, The page corruption occurs in the various levels as follows

Data Pages

Index Pages

Text/Image

File header page (Page ID 0)

Page 9 (Database Boot Page)

Allocation Pages (GAM, SGAM, PFS, IAM, BCM and DCM)

For Detailed info., on each page types:

https://docs.microsoft.com/en-us/sql/relational-databases/pages-and-extents-architecture-guide?view=sql-server-ver15

The page corruptions can be repaired automatically using Mirroring or Always-On Availability Group

But, Some of the page corruptions can't be repaired automatically by Mirroring or Always-On Availability Group either

Ok. Let's enable Mirroring, try with Failover and corrupt some of the Data Page(s) in Principal database and see whether the automatic page repair works!

let's begin...

My Test Environments

I am going to use SQL Server 2012 Instance "PANDIAN\SQL2012" as Principal, SQL Server 2012 Instance "PANDIAN\SQL2012_1" as Mirror and SQL Server 2016 Instance "PANDIAN\SQL2016" as Witness.

Why I am using Same Version of SQL Server for Principal and Mirror ? let me tell you later

Let's change the SQL management studio session in "SQLCMD Mode" (Query menu --> SQLCMD Mode)

1. To Create a Sample database in Principal Instance

:CONNECT PANDIAN\SQL2012

CREATE DATABASE PrimaryDB1

GO

Connecting to PANDIAN\SQL2012...

Disconnecting connection from PANDIAN\SQL2012...

2. Creating sample table and records

:CONNECT PANDIAN\SQL2012

USE PrimaryDB1

GO

CREATE TABLE TABLE1(ID INT IDENTITY(1,1), NAMES VARCHAR(10))

GO

INSERT TABLE1(NAMES) VALUES('SQL'),('Server'),('SQL Server')

INSERT TABLE1(NAMES) VALUES('Mirroring'),('Failover'),('Testing')

INSERT TABLE1(NAMES) VALUES('Automatic'),('Page'),('Corruption'),('Manual')

GO

Connecting to PANDIAN\SQL2012...

(3 row(s) affected)

(3 row(s) affected)

(4 row(s) affected)

Disconnecting connection from PANDIAN\SQL2012...

3. Sample records from the Table

:CONNECT PANDIAN\SQL2012

USE PrimaryDB1

GO

SELECT * FROM TABLE1

4. Taking FULL Backup from Principal Instance

:CONNECT PANDIAN\SQL2012

BACKUP DATABASE [PrimaryDB1] TO DISK = N'\\PANDIAN\Personal\MirroringPath\PrimaryDB1.Bak'

WITH NOFORMAT, NOINIT, SKIP, NOREWIND, NOUNLOAD, STATS = 10

GO

The shared path should be accessed by Mirror Instance

5. Taking LOG Backup from Principal Instance

:CONNECT PANDIAN\SQL2012

BACKUP LOG [PrimaryDB1] TO DISK = N'\\PANDIAN\Personal\MirroringPath\PrimaryDB1.Trn'

WITH NOFORMAT, NOINIT, SKIP, NOREWIND, NOUNLOAD, STATS = 10

GO

6. Restore the FULL Backup in Mirror Instance

:CONNECT PANDIAN\SQL2012_1

USE [Master]

GO

RESTORE DATABASE [PrimaryDB1] FROM DISK = N'C:\Personal\MirroringPath\PrimaryDB1.Bak'

WITH FILE = 1,

MOVE N'PrimaryDB1' TO N'C:\Personal\DATA\PrimaryDB1.mdf',

MOVE N'PrimaryDB1_log' TO N'C:\Personal\LOG\PrimaryDB1_log.ldf',

NORECOVERY, NOUNLOAD, STATS = 5

GO

The restore should be in NORECOVERY state

7. Restore LOG Backup in Mirror Instance

:CONNECT PANDIAN\SQL2012_1

USE [Master]

GO

RESTORE LOG [PrimaryDB1] FROM DISK = N'C:\Personal\MirroringPath\PrimaryDB1.Trn'

WITH FILE = 1, NORECOVERY, NOUNLOAD, STATS = 10

GO

The LOG backup restore also should be in NORECOVERY state

8. Create ENDPOINT for the Principal Instance

:CONNECT PANDIAN\SQL2012

CREATE ENDPOINT Mirroring

AS TCP (LISTENER_PORT=5022)

FOR DATA_MIRRORING (ROLE=PARTNER,ENCRYPTION=REQUIRED ALGORITHM AES);

9. Give CONNECT Permission to the ENDPOINT for the service account in Principal Instance

:CONNECT PANDIAN\SQL2012

GRANT CONNECT ON ENDPOINT::Mirroring TO [PANDIAN\SQLServerBuddy]

Make sure the SQL Server runs under this service account

10. To make sure the ENDPOINT created

:CONNECT PANDIAN\SQL2012

SELECT serverproperty('servername') [Servername], e.name [Endpoint], e.protocol_desc [Protocol], e.type_desc [Type], e.state_desc [State], e.role_desc [Role], e.encryption_algorithm_desc [ENCRYPTION ALGORITHM],ep.Port [Endpoint Port] FROM SYS.DATABASE_MIRRORING_ENDPOINTS e join SYS.TCP_ENDPOINTS ep ON (e.endpoint_id = ep.endpoint_id)

See, The ENDPOINT not started yet

11. Let me START the ENDPOINT

:CONNECT PANDIAN\SQL2012

ALTER ENDPOINT Mirroring STATE=STARTED;

12. To make sure the ENDPOINT started

:CONNECT PANDIAN\SQL2012

SELECT serverproperty('servername') [Servername], e.name [Endpoint], e.protocol_desc [Protocol], e.type_desc [Type], e.state_desc [State], e.role_desc [Role], e.encryption_algorithm_desc [ENCRYPTION ALGORITHM],ep.Port [Endpoint Port] FROM SYS.DATABASE_MIRRORING_ENDPOINTS e join SYS.TCP_ENDPOINTS ep ON (e.endpoint_id = ep.endpoint_id)

See, The ENDPOINT is started!

13. Create ENDPOINT for the Mirror Instance

:CONNECT PANDIAN\SQL2012_1

CREATE ENDPOINT Mirroring

AS TCP (LISTENER_PORT=5023) FOR DATA_MIRRORING (ROLE=PARTNER,ENCRYPTION=REQUIRED ALGORITHM AES);

14. Give CONNECT Permission to the ENDPOINT for the service account in Mirror Instance

:CONNECT PANDIAN\SQL2012_1

GRANT CONNECT ON ENDPOINT::Mirroring TO [PANDIAN\SQLServerBuddy]

Make sure the SQL Server runs under this service account

15. Let me START the ENDPOINT

:CONNECT PANDIAN\SQL2012_1

ALTER ENDPOINT Mirroring STATE=STARTED;

16. To make sure the ENDPOINT created and started

:CONNECT PANDIAN\SQL2012_1

SELECT serverproperty('servername') [Servername], e.name [Endpoint], e.protocol_desc [Protocol], e.type_desc [Type], e.state_desc [State], e.role_desc [Role], e.encryption_algorithm_desc [ENCRYPTION ALGORITHM],ep.Port [Endpoint Port] FROM SYS.DATABASE_MIRRORING_ENDPOINTS e join SYS.TCP_ENDPOINTS ep ON (e.endpoint_id = ep.endpoint_id)

17. Create ENDPOINT for the Witness Instance

CREATE ENDPOINT Mirroring

AS TCP (LISTENER_PORT=5023) FOR DATA_MIRRORING (ROLE=PARTNER,ENCRYPTION=REQUIRED ALGORITHM AES);

14. Give CONNECT Permission to the ENDPOINT for the service account in Mirror Instance

:CONNECT PANDIAN\SQL2012_1

GRANT CONNECT ON ENDPOINT::Mirroring TO [PANDIAN\SQLServerBuddy]

Make sure the SQL Server runs under this service account

15. Let me START the ENDPOINT

:CONNECT PANDIAN\SQL2012_1

ALTER ENDPOINT Mirroring STATE=STARTED;

16. To make sure the ENDPOINT created and started

:CONNECT PANDIAN\SQL2012_1

SELECT serverproperty('servername') [Servername], e.name [Endpoint], e.protocol_desc [Protocol], e.type_desc [Type], e.state_desc [State], e.role_desc [Role], e.encryption_algorithm_desc [ENCRYPTION ALGORITHM],ep.Port [Endpoint Port] FROM SYS.DATABASE_MIRRORING_ENDPOINTS e join SYS.TCP_ENDPOINTS ep ON (e.endpoint_id = ep.endpoint_id)

17. Create ENDPOINT for the Witness Instance

:CONNECT PANDIAN\SQL2016

CREATE ENDPOINT Mirroring

AS TCP (LISTENER_PORT=5024) FOR DATA_MIRRORING (ROLE=WITNESS,ENCRYPTION=REQUIRED ALGORITHM AES);

18. Give CONNECT Permission to the ENDPOINT for the service account in Witness Instance

:CONNECT PANDIAN\SQL2016

GRANT CONNECT ON ENDPOINT::Mirroring TO [PANDIAN\SQLServerBuddy]

Make sure the SQL Server runs under this service account

19. Let me START the ENDPOINT

:CONNECT PANDIAN\SQL2016

ALTER ENDPOINT Mirroring STATE=STARTED;

20. To make sure the ENDPOINT created and started

:CONNECT PANDIAN\SQL2016

SELECT serverproperty('servername') [Servername], e.name [Endpoint], e.protocol_desc [Protocol], e.type_desc [Type], e.state_desc [State], e.role_desc [Role], e.encryption_algorithm_desc [ENCRYPTION ALGORITHM],ep.Port [Endpoint Port] FROM SYS.DATABASE_MIRRORING_ENDPOINTS e join SYS.TCP_ENDPOINTS ep ON (e.endpoint_id = ep.endpoint_id)

21. To make Principal Instance as a Partner of Mirror Instance

:CONNECT PANDIAN\SQL2012_1

ALTER DATABASE [PrimaryDB1] SET PARTNER ='TCP://PANDIAN:5022'

Connecting to PANDIAN\SQL2012_1...

Disconnecting connection from PANDIAN\SQL2012_1...

22. To make Mirror Instance as a Partner of Principal Instance

:CONNECT PANDIAN\SQL2012

ALTER DATABASE [PrimaryDB1] SET PARTNER ='TCP://PANDIAN:5023'

Connecting to PANDIAN\SQL2012...

Disconnecting connection from PANDIAN\SQL2012...

23. To make Witness Instance as a Witness of Principal Instance

:CONNECT PANDIAN\SQL2012

ALTER DATABASE [PrimaryDB1] SET WITNESS ='TCP://PANDIAN:5024'

Connecting to PANDIAN\SQL2012...

Disconnecting connection from PANDIAN\SQL2012...

It's done.....

24. Let me check the Principal Database and Is in sync

25. Let me check the Mirror Database and Is in sync

So, Both the Databases - Principal and Mirror are in Sync now! Everything will be propagated from the Principal Database to Mirror databases.

26. Let me check the Database Mirroring Monitor and see how it goes..

Expand Principal "Databases" --> Right Click on the PrimaryDB1 --> Click Tasks --> Click "Launch Database Mirroring Monitor"

Everything looks Good!

See,

PANDIAN\SQL2012 acts as a Principal

PANDIAN\SQL2012_1 acts as a Mirror

and, Witness is watching from "TCP://PANDIAN:5024" which means "PANDIAN\SWL2016"

What will happen If anything goes wrong with Principal "PANDIAN\SQL2012" ? Or We manually failover it ?

In that case, Mirror instance should become as Principal and Current Principal will be act as a Mirror. Right ?

Let me Failover the Principal manually now and see

27. Manually failover the Principal

:CONNECT PANDIAN\SQL2012

USE [Master]

GO

ALTER DATABASE PrimaryDB1 SET PARTNER FAILOVER

As per MSDN - On the former principal, clients are disconnected from the database and in-flight transactions are rolled back

See,

PANDIAN\SQL2012_1 acts as a Principal

PANDIAN\SQL2012 becomes as Mirror

CREATE ENDPOINT Mirroring

AS TCP (LISTENER_PORT=5024) FOR DATA_MIRRORING (ROLE=WITNESS,ENCRYPTION=REQUIRED ALGORITHM AES);

18. Give CONNECT Permission to the ENDPOINT for the service account in Witness Instance

:CONNECT PANDIAN\SQL2016

GRANT CONNECT ON ENDPOINT::Mirroring TO [PANDIAN\SQLServerBuddy]

Make sure the SQL Server runs under this service account

19. Let me START the ENDPOINT

:CONNECT PANDIAN\SQL2016

ALTER ENDPOINT Mirroring STATE=STARTED;

20. To make sure the ENDPOINT created and started

:CONNECT PANDIAN\SQL2016

SELECT serverproperty('servername') [Servername], e.name [Endpoint], e.protocol_desc [Protocol], e.type_desc [Type], e.state_desc [State], e.role_desc [Role], e.encryption_algorithm_desc [ENCRYPTION ALGORITHM],ep.Port [Endpoint Port] FROM SYS.DATABASE_MIRRORING_ENDPOINTS e join SYS.TCP_ENDPOINTS ep ON (e.endpoint_id = ep.endpoint_id)

21. To make Principal Instance as a Partner of Mirror Instance

:CONNECT PANDIAN\SQL2012_1

ALTER DATABASE [PrimaryDB1] SET PARTNER ='TCP://PANDIAN:5022'

Connecting to PANDIAN\SQL2012_1...

Disconnecting connection from PANDIAN\SQL2012_1...

22. To make Mirror Instance as a Partner of Principal Instance

:CONNECT PANDIAN\SQL2012

ALTER DATABASE [PrimaryDB1] SET PARTNER ='TCP://PANDIAN:5023'

Connecting to PANDIAN\SQL2012...

Disconnecting connection from PANDIAN\SQL2012...

23. To make Witness Instance as a Witness of Principal Instance

:CONNECT PANDIAN\SQL2012

ALTER DATABASE [PrimaryDB1] SET WITNESS ='TCP://PANDIAN:5024'

Disconnecting connection from PANDIAN\SQL2012...

24. Let me check the Principal Database and Is in sync

25. Let me check the Mirror Database and Is in sync

So, Both the Databases - Principal and Mirror are in Sync now! Everything will be propagated from the Principal Database to Mirror databases.

26. Let me check the Database Mirroring Monitor and see how it goes..

Expand Principal "Databases" --> Right Click on the PrimaryDB1 --> Click Tasks --> Click "Launch Database Mirroring Monitor"

Everything looks Good!

See,

PANDIAN\SQL2012 acts as a Principal

PANDIAN\SQL2012_1 acts as a Mirror

and, Witness is watching from "TCP://PANDIAN:5024" which means "PANDIAN\SWL2016"

What will happen If anything goes wrong with Principal "PANDIAN\SQL2012" ? Or We manually failover it ?

In that case, Mirror instance should become as Principal and Current Principal will be act as a Mirror. Right ?

Let me Failover the Principal manually now and see

27. Manually failover the Principal

:CONNECT PANDIAN\SQL2012

USE [Master]

GO

ALTER DATABASE PrimaryDB1 SET PARTNER FAILOVER

Connecting to PANDIAN\SQL2012...

Disconnecting connection from PANDIAN\SQL2012...

28. Let me check the Database Mirroring Monitor now

Disconnecting connection from PANDIAN\SQL2012...

28. Let me check the Database Mirroring Monitor now

As per MSDN - On the former principal, clients are disconnected from the database and in-flight transactions are rolled back

See,

PANDIAN\SQL2012_1 acts as a Principal

PANDIAN\SQL2012 becomes as Mirror

Continues...