sp_executesql is recommended over using the EXEC statement to execute a string of query because of the below reasons

1. Self-contained batches

Statements in sp_executesql or EXEC are not compiled to an execution plan until its executed (Not parsed/checked for errors)

DECLARE @hai VARCHAR(5)

SET @hai = 'HAI'

EXEC sp_executesql N'PRINT @hai'

GO

--Or

DECLARE @hai VARCHAR(5)

SET @hai = 'HAI'

EXEC ('PRINT @hai')

GO

The above both block fails - The variable @hai become out of scope/boundary

Msg 137, Level 15, State 2, Line 1

Must declare the scalar variable "@hai".

2. Substituting Parameter values

sp_executesql supports the substitution of parameter values for any parameters specified in the Transact-SQL string, but the EXEC statement does not.

The SQL Server query optimizer will probably match the Transact-SQL statements from sp_executesql with execution plans from the previously executed statements, saving the overhead of compiling a new execution plan

With the EXEC statement, all parameter values must be converted to character or Unicode and made a part of the Transact-SQL string

USE [AdventureWorks2012]

GO

DECLARE @Query VARCHAR(100), @Param1 INT

SET @Param1 = 12742

SET @Query = 'SELECT * FROM Person.[Address] WITH(NOLOCK) WHERE AddressID =' + CAST(@Param1 AS VARCHAR)

EXEC(@Query)

A completely new Transact-SQL string must be built for each execution, even when the only differences are in the values supplied for the parameters. This generates extra overhead in the following several ways:

- The ability of the SQL Server query optimizer to match the new Transact-SQL string with an existing execution plan is hampered by the constantly changing parameter values in the text of the string, especially in complex Transact-SQL

statements.

- The entire string must be rebuilt for each execution.

- Parameter values (other than character or Unicode values) must be cast to a character or Unicode format for each execution

sp_executesql supports the setting of parameter values separately from the Transact-SQL string

USE [AdventureWorks2012]

GO

DECLARE @Query NVARCHAR(100), @LParam1 INT

SET @LParam1 = 12742

SET @Query = N'SELECT * FROM [AdventureWorks2012].Person.[Address] WITH(NOLOCK) WHERE AddressID =@Param1'

EXEC sp_executesql @Query,N'@Param1 Int', @Param1 = @LParam1

- Because the actual text of the Transact-SQL statement does not change between executions, the query optimizer should match the Transact-SQL statement in the second execution with the execution plan generated for the first execution.

- Therefore, SQL Server does not have to compile the second statement.

- The Transact-SQL string is built only once.

- The integer parameter is specified in its native format. Conversion to Unicode is not required here.

Note: Object names in the statement string must be fully qualified (i.e: Database.Schema.Objectname) in order for SQL Server to reuse the execution plan.

3. Re-using execution plan

Using sp_executesql can help reduce the overhead while still allowing SQL Server to reuse execution plans.

sp_executesql can be used instead of stored procedures when executing a Transact-SQL statement a number of times, when the only variation is in the parameter values supplied to the Transact-SQL statement. Because the Transact-SQL statements themselves remain constant and only the parameter values change, the SQL Server query optimizer is likely to reuse the execution plan it generates for the first execution!

/*EXEC*/

USE [AdventureWorks2012]

GO

DECLARE @Param1 VARCHAR(10)

SET @Param1 = 'Gail'

EXEC ('SELECT p.FirstName, pp.PhoneNumber FROM Person.Person p WITH(NOLOCK)

JOIN Person.PersonPhone pp WITH(NOLOCK)

ON (p.BusinessEntityID = pp.BusinessEntityID)

WHERE p.LastName = '''+ @Param1 + '''')

GO

The above statement executed 3 Times with various values(Goel, Gigi, Gail)

Check the execution plan generated (3 Separate Execution plans generated for every run based on the parameter value changes)

/*SP_EXECUTESQL*/

USE [AdventureWorks2012]

GO

DECLARE @Sql nvarchar(500)

SET @Sql = N'SELECT p.FirstName, pp.PhoneNumber FROM Person.Person p WITH(NOLOCK)

JOIN Person.PersonPhone pp WITH(NOLOCK)

ON (p.BusinessEntityID = pp.BusinessEntityID)

WHERE p.LastName = @LastName';

DECLARE @ParamDefinition nvarchar(25) = N'@LastName Varchar(10)'

DECLARE @lLastName Varchar(10)

SET @lLastName='Gail'

EXEC sp_executesql @Sql, @ParamDefinition,

@LastName=@lLastName;

GO

Check the execution plan generated as above(Only 1 execution plan generated and Re-used for all the subsequent runs irrespective of it's parameter value - See - Use Count column as 3)

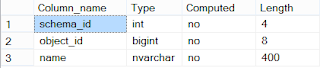

Here is the script used for the above screenshots:

WITH XMLNAMESPACES

(DEFAULT 'http://schemas.microsoft.com/sqlserver/2004/07/showplan')

SELECT

cp.usecounts [Use Count],

cp.objtype,

c.value('(QueryPlan/@CompileTime)[1]', 'int') [CompileTime(ms)],

c.value('(QueryPlan/@CompileCPU)[1]', 'int') [CompileCPU(ms)],

c.value('(QueryPlan/@CompileMemory)[1]', 'int') [CompileMemory(KB)],

LEFT(x.[text],50) + '...' + RIGHT(x.[text],10) [Plan Text]

FROM sys.dm_exec_cached_plans AS cp CROSS APPLY sys.dm_exec_sql_text(cp.plan_handle) AS X CROSS APPLY sys.dm_exec_query_plan(cp.plan_handle) AS qp

CROSS APPLY qp.query_plan.nodes('ShowPlanXML/BatchSequence/Batch/Statements/StmtSimple') AS n(c)

WHERE x.[text] LIKE '%SELECT p.FirstName%' AND x.[text] NOT LIKE '%sys.dm_exec_cached_plans%'